QuiX Quantum, a developer of photonic quantum computing hardware, announced it has demonstrated “below threshold” error mitigation for the first time on a photonic quantum computer, suppressing physical qubit errors to a level compatible with scalable, fault‑tolerant quantum computing.

The project was conducted on the QuiX Bia™ Cloud Quantum Computing Service in collaboration with NASA’s Quantum Artificial Intelligence Laboratory, the University of Twente, and Freie Universität Berlin, and was partially funded by the Netherlands Ministry of Defense’s Purple NECtar Quantum Challenges initiative.

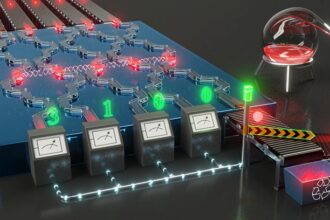

Photonic quantum computers use photons – particles of light – as their information carriers. The photons move around on an optical chip and entangle with each other because of their quantum particle statistics. However, the sources producing these particles are imperfect, and any path information inherent in the particles will destroy the entanglement, resulting in distinguishability errors.

Photon distillation is a hardware-level, coherent technique for error reduction that improves the quality of single photons before computation. Using quantum interference among multiple imperfect photons, the method creates a cleaner, more indistinguishable photon without heavy qubit redundancy or classical post-processing.

Using a programmable 20‑mode photonic processor, the team demonstrated a photon distillation gate that makes photons measurably more alike, reducing photon distinguishability error by a factor of 2.2. Despite additional noise introduced by the gate, the device still delivered a 1.2x net reduction in total error, demonstrating net‑gain mitigation.

The research also shows that combining photon distillation with quantum error correction may significantly reduce system-level resource demands. In modeling based on current photon source performance and photonic architectures, the approach could reduce the number of photon sources required per logical qubit by up to a factor of four, lowering system complexity and cost.

“This paper represents an important jump forward towards large-scale photonic quantum computing,” said David DiVincenzo, director of the Institute of Theoretical Nanoelectronics at the Peter Grünberg Institute at the Forschungszentrum Jülich.

He continued, “By using a multimode optical Fourier transform, the authors have established experimentally an elegant photon distillation scheme that would significantly slash required resource costs in the future photonic quantum processor. This work takes a big step forward on one of the most stubborn bottlenecks in creating indistinguishable photons, giving a hint of a scalable path towards quantum fault tolerance.”

“For any quantum computer modality to scale, you have to prove you can remove more error than you add while the computer is still able to run, and that’s what we’ve shown here,” said Jelmar Renema, Chief Scientist at QuiX. “Our photon distillation gate is compatible with running real computations and delivers net gain error mitigation once all gate noise is included. That’s why this is a major achievement for photonics and quantum computing in general.”

The findings are described in a paper available at https://arxiv.org/abs/2601.05947, which is currently undergoing peer review.