Founded in 1976 and headquartered in North Carolina, SAS is one of the world’s largest privately held software companies and a long‑standing leader in advanced analytics, statistics, and decision‑making technology. Originating as an academic project in statistical analysis, SAS has grown into a large enterprise software company whose platforms are used across financial services, healthcare, government, manufacturing, and life sciences to manage, analyze, and operationalize complex data at scale.

Known for its deep investment in research and development and its emphasis on practical, production‑ready analytics, SAS has increasingly positioned quantum computing as a potential extension of its decades‑long work in optimization, modeling, and hybrid computational workflows.

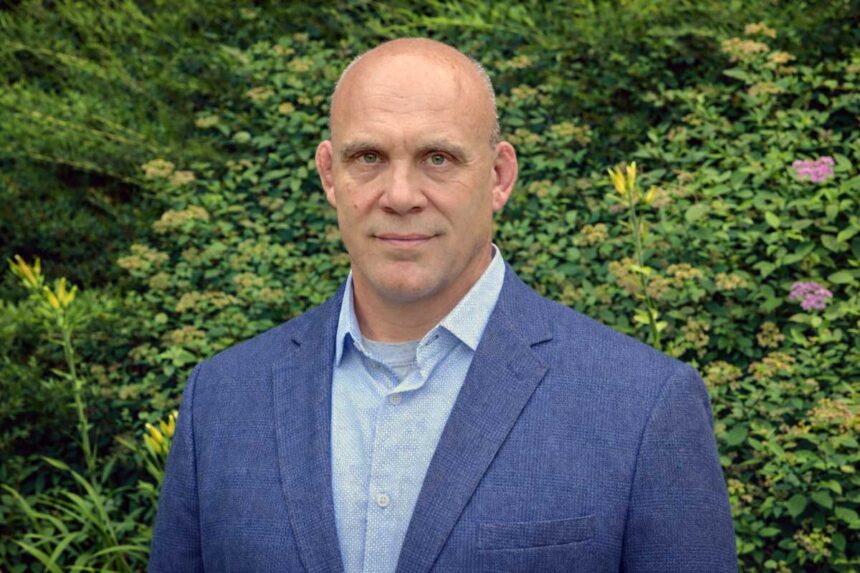

As Principal Quantum Systems Architect at SAS, Bill Wisotsky pioneered the use of quantum computing at the company and today sits at the intersection of theory, enterprise reality, and patient experimentation. We sat down with him for a candid, technically grounded, and deeply pragmatic conversation about what quantum computing is today, how it fits into enterprise software, and how SAS is planning to use it as a tool in its vast toolkit.

A long relationship with quantum

Wisotsky’s engagement with quantum computing began decades ago, during graduate school in the late 1990s and early 2000s, when quantum physics, and the then‑theoretical notion of quantum computation, first caught his attention. Quantum computing became, in his words, “a real hobby,” something he continued to follow alongside his work in professional services.

The pivot came during the COVID‑19 lockdowns. With normal routines suspended, Wisotsky began experimenting directly. IBM’s publicly accessible quantum computers provided a playground, and Wisotsky taught himself the software development kits, learning how real quantum workflows operated. It was during this period that a realization set in: quantum computing could align deeply with how SAS already approached analytics and optimization.

“I started building a very rudimentary hybrid workflow. But it worked. It showed that it could happen.”

The preparation to make a case internally took over a year of sustained effort, during which Wisotsky had to confirm that the approach actually worked. He had to make sure that he “…wasn’t going to look like a fool after saying ‘yes we should do quantum computing’ and in the end it doesn’t do anything,” he admitted.

Selling quantum inside a corporation

That proof set the stage for a far more difficult challenge: convincing a large enterprise that quantum computing was workable. Quantum was not yet a revenue‑ready service, and the breakthrough came only after an extended internal search for an organizational home. That home turned out to be an internal incubation group called the Solutions Factory, where new ideas and solutions were tried and tested.

Under Alex Boakye’s executive sponsorship, quantum computing was reframed as an experimental capability worth testing. Wisotsky was confronted with choices around which modalities to pursue, and crucially, the early team assembled around Wisotsky was not composed of quantum physicists. They were optimization experts—people fluent in problem formulation, constraints, objectives, and classical solvers.

The team’s first experiments were largely futile, because early optimization tests showed no speedups. In some cases, quantum approaches failed even to find feasible solutions. In others, they worked, but took longer than classical approaches. The turning point came not from new hardware, but from a shift in mindset.

Wisotsky reached out to colleagues steeped in quantum physics rather than classical data science. The advice was to stop thinking like an optimizer and start thinking like a physicist. “That’s when things changed,” he said. “It’s not about small test problems. It’s about very large solution spaces.”

Once the team focused on massive combinatorial landscapes—problems where enumerating possibilities becomes intractable—quantum methods began to show value. Kidney exchange optimization and Nash equilibrium problems yielded strong results, enough to justify continued investment and eventually to catch the attention of SAS’s CTO Bryan Harris.

Harris encouraged the team to go further after seeing positive results; Wisotsky moved to R&D; and a product manager and developers were added to the team. Wisotsky no longer had to spend nights and weekends working on quantum—it was now his full-time job.

Modalities, tradeoffs, and hardware reality

SAS’s initial quantum work centered on quantum annealing because it offered immediate pragmatic advantages. Quantum annealers supported far more variables than universal quantum computers at the time. Their hybrid backends automatically decomposed large problems, shielding developers from hardware limitations. Even more importantly, annealing’s problem formulation closely mirrored classical optimization frameworks, providing an easier path forward and a shorter learning curve.

“You define objectives. You define constraints,” Wisotsky explained. “That made it much easier for optimization experts to adapt.” This alignment reduced cognitive load, accelerated experimentation, and enabled quicker demonstrations of value.
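To make the "objectives and constraints" framing concrete, here is a minimal sketch of the kind of formulation annealers accept: a QUBO (quadratic unconstrained binary optimization), where a constraint is folded into the objective as a penalty term. The toy problem (pick exactly `k` items with the highest total value), the function names, and the brute-force solver standing in for an annealer are all illustrative assumptions, not SAS's or any vendor's code.

```python
from itertools import product

def build_qubo(values, k, penalty):
    """Build QUBO coefficients for: maximize total value, choose exactly k items.

    Objective:  minimize -sum(values[i] * x[i])
    Constraint: penalty * (sum(x) - k)**2, expanded using x[i]**2 == x[i].
    The constant term penalty * k**2 is dropped; it does not change the argmin.
    """
    n = len(values)
    qubo = {}
    for i in range(n):
        qubo[(i, i)] = -values[i] + penalty * (1 - 2 * k)
        for j in range(i + 1, n):
            qubo[(i, j)] = 2 * penalty
    return qubo

def energy(qubo, x):
    """Evaluate the QUBO energy of a binary assignment x."""
    return sum(coeff * x[i] * x[j] for (i, j), coeff in qubo.items())

def brute_force_minimum(qubo, n):
    """Classical stand-in for an annealer: enumerate all 2**n assignments."""
    return min(product((0, 1), repeat=n), key=lambda x: energy(qubo, x))

# Hypothetical toy data: four items, choose exactly two.
values = [5, 3, 8, 6]
qubo = build_qubo(values, k=2, penalty=20)
best = brute_force_minimum(qubo, len(values))
print(best, energy(qubo, best))  # (0, 0, 1, 1) -94
```

The enumeration is only feasible because the example is tiny. The point of the sketch is the shape of the model: an optimization expert writes objectives and constraints much as they would for a classical solver, and the sampler searches the resulting energy landscape.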

Today, SAS evaluates multiple quantum modalities simultaneously: superconducting qubits, trapped ions, neutral atoms, and quantum annealers. Each comes with tradeoffs in speed, in fidelity, and in how algorithms must be designed. Superconducting qubits, Wisotsky explained, offer very fast gate speeds, but at the cost of rigid qubit layouts. Entangling distant qubits requires additional gates, increasing circuit depth and noise.

“The more gates you have, the more probability you introduce noise,” he said.
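A rough back-of-the-envelope sketch illustrates the point. It assumes qubits arranged in a simple line with nearest-neighbor coupling only, a SWAP decomposed into three CNOTs, and independent gate errors with a single hypothetical error rate. Real devices have richer layouts and noise, so the numbers are illustrative only.

```python
def cnot_count_on_line(control, target):
    """CNOTs needed to entangle two qubits on a nearest-neighbor line.

    Assumes one qubit is moved next to the other with SWAPs
    (3 CNOTs each), followed by the single intended CNOT.
    """
    distance = abs(control - target)
    if distance == 0:
        raise ValueError("control and target must be different qubits")
    swaps = distance - 1
    return 3 * swaps + 1

def estimated_success(gate_count, error_per_gate):
    """Crude estimate: probability that no gate fails, assuming independent errors."""
    return (1.0 - error_per_gate) ** gate_count

# Hypothetical 1% two-qubit error rate.
for target in (1, 3, 5):
    gates = cnot_count_on_line(0, target)
    print(target, gates, round(estimated_success(gates, 0.01), 4))
```

Under these assumptions, entangling qubit 0 with its neighbor costs one gate, while reaching qubit 5 costs 13 gates and lowers the estimated success probability to roughly 0.88.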

By contrast, trapped ions and neutral atoms offer all‑to‑all connectivity, reducing the need for complex transpilation. Neutral atom systems add another unique capability: optical tweezers, which allow qubits themselves to be rearranged. “You can map the problem to the hardware—or move the hardware to the problem,” Wisotsky noted, using the traveling salesman problem as an example.

The correct choice depends on fidelity requirements, flexibility, runtime tolerance, and the structure of the problem itself. Currently, SAS works with not only IBM but also D-Wave and QuEra.

Quantum machine learning and the hybrid workflow

Quantum at SAS is never standalone: every application is deeply hybrid, tightly integrated with classical preprocessing, modeling, and validation.

Quantum reservoir computing, in particular, fits naturally into SAS workflows. Classical data undergoes extensive preprocessing, then encoding into quantum states using methods like angle or amplitude encoding. The quantum system performs a high‑dimensional transform, producing a richer feature space that feeds directly into classical machine learning models.
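The encode, transform, and read-out pattern can be sketched with a small state-vector simulation in plain Python. This is a toy stand-in and not a real reservoir or SAS's pipeline: it assumes features already scaled to roughly [0, π], uses angle encoding via RY rotations, a fixed layer of CZ gates and RY rotations as the "transform", and Z and ZZ expectation values as the output features. All function names and angle values are hypothetical.

```python
import math

def apply_ry(state, qubit, theta):
    """Apply an RY(theta) rotation to one qubit of a real state vector."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    new_state = state[:]
    bit = 1 << qubit
    for idx in range(len(state)):
        if not idx & bit:
            a0, a1 = state[idx], state[idx | bit]
            new_state[idx] = c * a0 - s * a1
            new_state[idx | bit] = s * a0 + c * a1
    return new_state

def apply_cz(state, q1, q2):
    """Apply a CZ gate: flip the sign where both qubits are 1."""
    mask = (1 << q1) | (1 << q2)
    return [-a if (idx & mask) == mask else a for idx, a in enumerate(state)]

def z_product_expectation(state, qubits):
    """Expectation value of the product of Z operators on the given qubits."""
    total = 0.0
    for idx, amp in enumerate(state):
        parity = sum((idx >> q) & 1 for q in qubits) % 2
        total += (-1.0 if parity else 1.0) * amp * amp
    return total

def quantum_features(x, fixed_angles):
    """Map n classical features to n + n*(n-1)/2 expectation-value features."""
    n = len(x)
    state = [0.0] * (1 << n)
    state[0] = 1.0
    for q, value in enumerate(x):           # angle encoding
        state = apply_ry(state, q, value)
    for q in range(n - 1):                  # fixed entangling layer
        state = apply_cz(state, q, q + 1)
    for q, theta in enumerate(fixed_angles):  # fixed (untrained) rotations
        state = apply_ry(state, q, theta)
    singles = [z_product_expectation(state, [q]) for q in range(n)]
    pairs = [z_product_expectation(state, [i, j])
             for i in range(n) for j in range(i + 1, n)]
    return singles + pairs

features = quantum_features([0.3, 1.2, 2.5], fixed_angles=[0.7, 1.9, 0.4])
print(len(features))  # 6
```

Three input features become six outputs that depend nonlinearly on the inputs. In a hybrid workflow of the kind described here, a vector like `features` would then be passed to an ordinary classical model for training.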

Importantly, preprocessing goals differ between classical and quantum models. Classical ML often seeks to eliminate correlated variables; quantum models can benefit from correlation, using entanglement to explore complex relationships. Misapplying classical instincts can therefore degrade quantum performance.

Wisotsky believes that pursuing “quantum advantage” as an abstract benchmark is impractical—it is better to pursue customer value. In kidney exchange optimization, for example, quantum methods delivered speed improvements, but speed alone was not the primary benefit. Quantum solvers also produced multiple high‑quality solutions, giving decision‑makers flexibility in dynamic environments where conditions change after deployment.

In machine learning, Wisotsky says that quantum is not currently faster, but where it shows promise is in expressivity and data efficiency, in training models with less data and uncovering a richer landscape of possibilities. He even hypothesizes that quantum‑derived models may prove more robust to data drift over time, though that theory remains under investigation.

Customers do not always arrive asking for quantum solutions. Sometimes they simply have problems that run too slowly or are too complex. In every case, SAS attempts the best possible classical solution first—not only to avoid unnecessary quantum costs, but to establish a meaningful baseline.

“If it can be solved classically, we don’t use quantum.”

Quantum enters consideration when solution spaces explode combinatorially. Wisotsky likens it to having to find a lake in a vast mountain range armed only with a compass—classical methods must search blindly through exhaustive trial and error, rather than seeing the landscape all at once. Quantum search is equivalent to flying a helicopter over the terrain and seeing all valleys at once.

Quantum, AI, and the impossibility of predicting where it will go

Quantum computing will not replace AI or classical computing; it will work alongside them in a hybrid manner for the foreseeable future. Even quantum neural networks rely on classical optimization. Large language models have not yet hit insurmountable classical limits; what quantum offers is acceleration, enrichment, and alternative representations instead of wholesale substitution.

However, early research into quantum language models hints at something intriguing: quantum correlations that differ fundamentally from those learned by classical transformers.

Two sectors stand out as quantum early adopters, according to Wisotsky: financial services and life sciences. Banks are investing aggressively, building internal quantum teams, test cases and intellectual property long before hardware becomes fully fault‑tolerant. The goal is readiness, so that when scalable quantum arrives, they can deploy immediately. Life sciences and healthcare, meanwhile, align directly with quantum’s original promise: modeling nature itself. Drug discovery, biologics, protein interactions, and medical imaging all stand to benefit as quantum hardware matures.

Wisotsky is skeptical of predictions, including his own. “I guarantee whatever I say won’t be true in ten years,” he said, invoking analogies to the early internet, personal computers, and the Wright brothers. He argues that QPUs will largely operate behind the scenes, orchestrated by CPUs alongside GPUs, invoked automatically for the hardest parts of the hardest problems. For Wisotsky, it is just another tool in the toolbox—one that sees the whole mountain range at once.