In 2025, John Martinis, along with John Clarke and Michel Devoret, won the Nobel Prize in Physics for their 1985 discovery of macroscopic quantum mechanical tunnelling and energy quantisation in an electric circuit. The discovery showed that quantum effects can manifest at observable scales and paved the way for the development of superconducting qubits, the most mature of today’s quantum computing modalities.

Since that breakthrough, Martinis has been closely involved in the practical development of superconducting quantum computers. Most notably, he joined Google Quantum AI in 2014 and led the hardware team behind a widely hailed 2019 experiment often described as a demonstration of quantum supremacy. In 2022, he co-founded Qolab, a company focused on developing practical quantum technologies informed by the lessons of large-scale experimental systems.

In a lecture on the history of superconducting qubits, given at the National Quantum Federated Foundry (NQFF) Industry Day organised by Singapore’s National Quantum Office and the Agency for Science, Technology and Research (A*STAR), Martinis traced the development of superconducting quantum computers from 1985 to today.

Below is a condensed transcript of the first half of the lecture, in which he discusses the physics of superconducting qubits and the advances and bottlenecks in quantum computing.

(The second half, which will be published separately, reveals Qolab’s roadmap and manufacturing plans, and is accompanied by a short interview with Martinis and Alan Ho, CEO and Co-Founder of Qolab.)

Introduction and scientific motivation

It is fascinating to consider that quantum mechanics—a subject physicists traditionally learn as a capstone of their training—can actually be used to store and manipulate information. I have been working in this area for about forty years now, and I hope to continue for several more decades.

Our work in 1985 expanded how quantum mechanics could be used. Specifically, we demonstrated that it is possible to build entirely new, “artificial atoms” and quantum devices—not from naturally occurring elements in the periodic table, but from macroscopic electrical components such as capacitors, inductors, transmission lines, and Josephson junctions. This expanded the set of fundamental building blocks available to physicists.

If electrical circuits can be engineered to obey quantum mechanics, and if those circuits resemble the kinds of electrical systems we already know how to design and fabricate, then it becomes possible to harness quantum phenomena in entirely new and powerful ways. In effect, we moved from studying quantum mechanics in naturally occurring microscopic systems to studying macroscopic “atoms” constructed from engineered electrical circuits. This opened the door to a new class of devices and, ultimately, to quantum computation.

The 1985 experiment and macroscopic quantum behavior

At the time, our 1985 experiment focused on macroscopic quantum tunneling. The device itself was about one centimeter across—clearly macroscopic by any standard. You could barely see the two wires connected to the Josephson junction. The purpose of the experiment was to determine whether such a macroscopic electrical object could obey quantum mechanics in the same way atoms do.

Initially, this was a fundamental physics experiment. We wanted to establish whether quantum mechanics could describe the behavior of an engineered macroscopic system. Over time, however, as many groups around the world continued this line of research, it became clear that these systems could be combined in novel ways to create highly sophisticated quantum devices, including quantum bits, or qubits, and ultimately quantum computers.

One of the central challenges in building such a system was that it had to be carefully engineered. Unlike atoms or molecules, which are provided by nature, these devices had to be designed from the ground up. Several critical issues had to be addressed early on.

The first issue involved noise. The quantum device was connected via wires to control electronics at room temperature. Thermal noise from the room-temperature environment could propagate down those wires and completely destroy the fragile quantum behavior of the system. In fact, the first version of the experiment failed entirely—it was a complete disaster. This forced us to rethink the design.

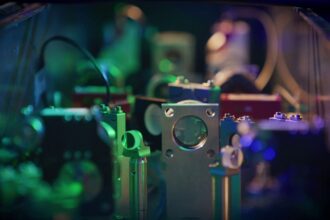

The solution involved introducing carefully designed filters between the room-temperature electronics and the quantum device. These filters attenuated microwave noise traveling down the wires. Since the device operated at microwave frequencies—around five gigahertz, comparable to cell phone frequencies—any uncontrolled noise at those frequencies would have overwhelmed the quantum signal.
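
The effect of such filtering can be sketched with the standard noise-temperature rule for a matched attenuator. A stage with power attenuation A, held at physical temperature T_stage, passes T_in/A of the incoming noise and adds (1 − 1/A)·T_stage of its own. The Python sketch below chains three such stages. The stage temperatures and 20 dB values are hypothetical and do not describe the 1985 filter design. The rule is also the classical (Rayleigh-Jeans) approximation.

```python
"""Noise temperature after a chain of cold attenuators (classical sketch)."""


def db_to_linear(db: float) -> float:
    """Convert a power attenuation in dB to a linear ratio (>= 1)."""
    return 10 ** (db / 10)


def attenuate(t_in: float, atten_db: float, t_stage: float) -> float:
    """Noise temperature leaving one matched attenuator.

    Incoming noise is divided by the attenuation, and the lossy stage adds
    thermal noise at its own physical temperature.
    """
    a = db_to_linear(atten_db)
    return t_in / a + (1 - 1 / a) * t_stage


# Hypothetical wiring: (attenuation in dB, stage temperature in kelvin).
STAGES = [(20.0, 4.0), (20.0, 0.1), (20.0, 0.02)]


def noise_chain(t_room: float = 300.0, stages=STAGES) -> list[float]:
    """Noise temperature at the input and after each successive stage."""
    temps = [t_room]
    for atten_db, t_stage in stages:
        temps.append(attenuate(temps[-1], atten_db, t_stage))
    return temps


if __name__ == "__main__":
    labels = ["300 K input", "after 4 K", "after 100 mK", "after 20 mK"]
    for label, t in zip(labels, noise_chain()):
        print(f"{label:>13}: {t * 1e3:10.2f} mK")
```

Each cold stage both removes noise and re-radiates at its own temperature. The residual at the device therefore ends up close to the temperature of the coldest stage rather than at 300 K.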

The second challenge was that, at the time, microwave engineering was not well understood in this context. Today this seems obvious, but back then it was not. The system had to be designed explicitly as a microwave device, with transmission lines and impedance-controlled environments. Designed this way, the device, “looking out” into the external world, saw a well-defined and controllable electromagnetic environment.

The central element of the device was a superconducting Josephson junction. In a superconductor, electrical current can flow without any voltage appearing across the material, meaning there is no resistance and no energy dissipation. This is why MRI machines, for example, can sustain enormous magnetic fields for long periods using superconducting coils.

In a Josephson junction, as long as the current remains below a critical value, the device stays in a zero-voltage state. When the current exceeds that critical value, the junction switches into a voltage state, behaving more like a conventional resistor. The transition between these two states is what we measure experimentally.

Quantum tunneling, temperature dependence, and energy levels

Even before reaching the critical current, thermal fluctuations, electrical noise, or quantum mechanical effects can cause the junction to switch into the voltage state. This means the transition occurs at a slightly lower current than the classical prediction. By carefully measuring how and when this switching occurs, we can extract information about the underlying physics and determine whether quantum mechanics is governing the behavior.
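
The claim that switching happens below the critical current can be made quantitative with a short simulation. The sketch below rests on several assumptions:

- thermal activation only, with no tunneling;
- the standard cubic-approximation barrier ΔU = (4√2/3)·E_J·(1 − I/I₀)^{3/2}, with E_J = I₀Φ₀/2π;
- an attempt frequency equal to the bias-dependent plasma frequency ω_p/2π, with the rate prefactor taken as one.

The junction parameters, temperature, and ramp rate are all hypothetical. The current is ramped up from zero, and the survival probability in the zero-voltage state is tracked until it falls to one half.

```python
"""Median switching current of a ramped Josephson junction (thermal escape only, sketch)."""
import math

PHI0 = 2.067833848e-15   # magnetic flux quantum, Wb
KB = 1.380649e-23        # Boltzmann constant, J/K

# Hypothetical junction and measurement parameters.
I0 = 10e-6     # critical current, A
C = 5e-12      # junction capacitance, F
T = 0.1        # temperature, K
RAMP = 1e-3    # current ramp rate dI/dt, A/s


def barrier(i: float) -> float:
    """Cubic-approximation barrier height (J) at bias current i < I0."""
    e_j = I0 * PHI0 / (2 * math.pi)
    return (4 * math.sqrt(2) / 3) * e_j * (1 - i / I0) ** 1.5


def plasma_freq(i: float) -> float:
    """Small-oscillation plasma frequency (rad/s) at bias current i < I0."""
    return 2 ** 0.25 * math.sqrt(2 * math.pi * I0 / (PHI0 * C)) * (1 - i / I0) ** 0.25


def escape_rate(i: float) -> float:
    """Thermal escape rate (1/s): attempt frequency times the Boltzmann factor."""
    return plasma_freq(i) / (2 * math.pi) * math.exp(-barrier(i) / (KB * T))


def median_switching_current(steps: int = 200_000) -> float:
    """Ramp the bias up from zero; return the current where survival falls to 1/2."""
    d_i = I0 / steps
    d_t = d_i / RAMP                  # time spent at each current step
    log_half = math.log(0.5)
    log_survival = 0.0
    for k in range(1, steps):
        i = k * d_i
        log_survival -= escape_rate(i) * d_t   # d(ln S) = -Gamma * dt
        if log_survival <= log_half:
            return i
    return I0   # fallback: no switching before the critical current


if __name__ == "__main__":
    print(f"median switching current ≈ {median_switching_current() / I0:.4f} I0")
```

Because the escape rate rises exponentially as the barrier shrinks, switching happens over a narrow range of current just below I₀. The position of that range depends on temperature, which is what makes the measurement a probe of the underlying physics.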

In the experiment, current was applied from room temperature through a series of filters into the Josephson junction. We monitored the transition from the zero-voltage state to the voltage state as a function of current and temperature, taking data from approximately one kelvin down to about twenty millikelvin. For reference, room temperature is about three hundred kelvin. Operating at such low temperatures is essential to suppress thermal noise that would otherwise disrupt quantum behavior.
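
One way to see why millikelvin temperatures matter is to compare the microwave photon energy hf with the thermal energy k_BT. The Bose-Einstein mean occupation n̄ = 1/(e^{hf/k_BT} − 1) makes the comparison concrete. The sketch below evaluates n̄ for a mode at five gigahertz, the rough operating scale mentioned earlier. The exact frequency is an illustrative choice.

```python
"""Mean thermal photon number of a 5 GHz mode at several temperatures."""
import math

H = 6.62607015e-34      # Planck constant, J*s
KB = 1.380649e-23       # Boltzmann constant, J/K
FREQ = 5e9              # mode frequency, Hz (illustrative)


def mean_photons(temp_k: float, freq_hz: float = FREQ) -> float:
    """Bose-Einstein occupation 1 / (exp(hf/kT) - 1)."""
    return 1.0 / math.expm1(H * freq_hz / (KB * temp_k))


if __name__ == "__main__":
    for temp in (300.0, 1.0, 0.02):
        print(f"T = {temp:7.3f} K  ->  mean photons = {mean_photons(temp):.3g}")
```

At room temperature the mode holds over a thousand thermal photons. At twenty millikelvin the occupation falls to a few parts per million, so the system sits essentially in its ground state.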

At higher temperatures, the switching behavior could be explained by thermal activation over the barrier, governed by a Boltzmann factor that depends exponentially on the barrier height divided by kT, where k is Boltzmann’s constant and T the temperature. As we lowered the temperature, the effective temperature extracted from the switching data dropped along with it, until eventually the behavior stopped changing with temperature altogether. This temperature-independent behavior matched precisely what we calculated for quantum mechanical tunneling through the barrier.
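
The crossover from thermal activation to tunneling can be illustrated with the textbook escape rates for the cubic approximation of a current-biased junction, in the low-damping limit:

- thermal escape: Γ_t = (ω_p/2π)·exp(−ΔU/k_BT), with the prefactor taken as one;
- quantum tunneling: Γ_q = a_q(ω_p/2π)·exp(−7.2ΔU/ħω_p), with a_q = (864πΔU/ħω_p)^{1/2}.

An escape temperature T_esc is defined by writing the total rate as (ω_p/2π)·exp(−ΔU/k_BT_esc). At high temperature T_esc tracks T, and at low temperature it flattens out. Simply adding the two rates is a crude interpolation, and the junction parameters and fixed bias are hypothetical. This is a sketch, not the analysis used in the 1985 work.

```python
"""Escape temperature vs bath temperature: thermal activation plus tunneling (sketch)."""
import math

PHI0 = 2.067833848e-15   # magnetic flux quantum, Wb
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
KB = 1.380649e-23        # Boltzmann constant, J/K

# Hypothetical junction held at one fixed bias.
I0 = 10e-6     # critical current, A
C = 5e-12      # junction capacitance, F
X = 0.017      # reduced bias 1 - I/I0

E_J = I0 * PHI0 / (2 * math.pi)                                          # Josephson energy, J
DU = (4 * math.sqrt(2) / 3) * E_J * X ** 1.5                             # barrier height, J
W_P = 2 ** 0.25 * math.sqrt(2 * math.pi * I0 / (PHI0 * C)) * X ** 0.25   # plasma frequency, rad/s
ATTEMPT = W_P / (2 * math.pi)                                            # attempt frequency, Hz


def thermal_rate(temp: float) -> float:
    """Thermal activation: attempt frequency times exp(-DU / kT), prefactor taken as 1."""
    return ATTEMPT * math.exp(-DU / (KB * temp))


def quantum_rate() -> float:
    """Zero-temperature tunneling rate for a cubic well in the low-damping limit."""
    ratio = DU / (HBAR * W_P)
    a_q = math.sqrt(864 * math.pi * ratio)
    return a_q * ATTEMPT * math.exp(-7.2 * ratio)


def escape_temperature(temp: float) -> float:
    """T_esc defined by total rate = ATTEMPT * exp(-DU / (k * T_esc)).

    Adding the two rates is a crude interpolation across the crossover.
    """
    total = thermal_rate(temp) + quantum_rate()
    return DU / (KB * math.log(ATTEMPT / total))


if __name__ == "__main__":
    t_cross = HBAR * W_P / (2 * math.pi * KB)
    print(f"crossover estimate hbar*w_p/(2*pi*k) = {t_cross * 1e3:.1f} mK")
    for temp in (0.3, 0.1, 0.06, 0.04, 0.02, 0.01):
        print(f"T = {temp * 1e3:6.1f} mK  ->  T_esc = {escape_temperature(temp) * 1e3:6.1f} mK")
```

Below a few tens of millikelvin in this toy model, the escape temperature stops following the bath temperature. This is the temperature-independent signature described above.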

This was a strong indication that the system was behaving quantum mechanically. However, an even more compelling demonstration involved observing discrete quantum energy levels.

To explain why energy levels are uniquely quantum mechanical, consider a simple example. If you pass current through a tungsten filament, as in an incandescent light bulb, it heats up and emits white light. White light contains a continuous spectrum of colors—red, blue, green, and everything in between.

In contrast, consider a neon sign. When electrical current passes through neon gas, the emitted light consists of distinct colors. These discrete spectral lines arise because neon atoms have specific, quantized energy levels. Light is emitted only when electrons transition between these levels, producing photons of well-defined energies and colors. This phenomenon was historically puzzling and ultimately required the development of quantum mechanics to explain.

In our system, we searched for analogous discrete transitions. We measured the rate at which the junction escaped from the zero-voltage state while applying microwaves at frequencies of around two gigahertz. As we varied the applied current, which in turn changed the system’s characteristic frequencies, we observed three distinct peaks in the escape rate.

These peaks corresponded to transitions between specific quantum energy levels: from the ground state to the first excited state, from the first to the second, and from the second to the third. Because the levels are not equally spaced, each transition matched the microwave frequency at a different bias current, so the microwaves enhanced the escape probability only at those specific points. This provided direct evidence that the system possessed discrete quantum energy levels.
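
A single microwave frequency produces several peaks because the well is anharmonic. This can be sketched with the first-order anharmonic correction for a cubic well, ω_{n,n+1} ≈ ω_p[1 − (5/36)(n+1)ħω_p/ΔU]. At a given bias, each transition has a slightly different frequency, so sweeping the current brings them into resonance one at a time. The junction parameters below are hypothetical. The perturbative formula is only approximate when the well holds just a few levels.

```python
"""Bias currents at which a fixed microwave drive hits each level transition (sketch)."""
import math

PHI0 = 2.067833848e-15   # magnetic flux quantum, Wb
HBAR = 1.054571817e-34   # reduced Planck constant, J*s

# Hypothetical junction and drive.
I0 = 10e-6       # critical current, A
C = 50e-12       # junction capacitance, F
F_DRIVE = 2e9    # microwave drive frequency, Hz


def transition_freq(x: float, n: int) -> float:
    """Frequency (Hz) of the n -> n+1 transition at reduced bias x = 1 - I/I0."""
    e_j = I0 * PHI0 / (2 * math.pi)
    barrier = (4 * math.sqrt(2) / 3) * e_j * x ** 1.5
    w_p = 2 ** 0.25 * math.sqrt(2 * math.pi * I0 / (PHI0 * C)) * x ** 0.25
    shift = (5 / 36) * (n + 1) * HBAR * w_p / barrier   # first-order anharmonic correction
    return w_p / (2 * math.pi) * (1 - shift)


def resonant_bias(n: int, lo: float = 1e-6, hi: float = 0.5, iters: int = 100) -> float:
    """Bisect for the bias current where transition n -> n+1 equals F_DRIVE.

    transition_freq increases monotonically with x, so bisection on x is safe.
    """
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if transition_freq(mid, n) < F_DRIVE:
            lo = mid
        else:
            hi = mid
    return I0 * (1 - 0.5 * (lo + hi))


if __name__ == "__main__":
    for n in range(3):
        print(f"{n} -> {n + 1}: resonant at I = {resonant_bias(n) * 1e6:.4f} uA")
```

Higher transitions have smaller frequencies at a given bias. They therefore reach the drive frequency at a lower current, which gives one peak per transition as the current is swept.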

From quantum energy levels to qubits and computation

Once these discrete energy levels were established, they could be used to encode information. This is where the concept of the qubit emerges. The lowest energy level can represent a logical zero, while the first excited state represents a logical one. Information can be encoded and manipulated by driving transitions between these states.

The fundamental difference between classical and quantum information lies in the principle of superposition. In a classical computer, a bit is either zero or one. In a quantum system, a qubit can exist in a superposition of zero and one simultaneously. Quantum mechanics allows operations that transform a definite state, such as zero, into a superposition state, such as zero plus one. This expands the instruction set beyond what is possible classically and provides new computational capabilities.
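
A minimal state-vector sketch of such an operation follows. A qubit is represented as a pair of complex amplitudes for |0⟩ and |1⟩. The Hadamard gate is one standard example of an operation that maps |0⟩ to (|0⟩ + |1⟩)/√2; it is chosen here for illustration, not as the gate used in any particular device. Measurement probabilities are the squared magnitudes of the amplitudes.

```python
"""One-qubit state vector: turning |0> into an equal superposition."""
import math

Vector = tuple[complex, complex]
Matrix = tuple[tuple[complex, complex], tuple[complex, complex]]

ZERO: Vector = (1 + 0j, 0j)                   # |0>, the logical zero
S = 1 / math.sqrt(2)
HADAMARD: Matrix = ((S, S), (S, -S))          # maps |0> -> (|0> + |1>)/sqrt(2)


def apply(gate: Matrix, state: Vector) -> Vector:
    """Matrix-vector product for a single-qubit gate."""
    return (
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    )


def probabilities(state: Vector) -> tuple[float, float]:
    """Born rule: the probability of reading 0 or 1 is |amplitude|^2."""
    return (abs(state[0]) ** 2, abs(state[1]) ** 2)


if __name__ == "__main__":
    plus = apply(HADAMARD, ZERO)
    print(plus, probabilities(plus))
    print(apply(HADAMARD, plus))   # applying H twice returns |0> (up to rounding)
```

Classical logic has no operation that turns a definite 0 into such a weighted combination of 0 and 1. This is the sense in which the instruction set is expanded.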

One way to understand the power of this is through parallelism. If a qubit is in a superposition of zero and one, and you apply an algorithm to it, the computation effectively processes both possibilities at once. With one qubit, this yields a factor-of-two parallelism. With two qubits, the system simultaneously represents four states. With three qubits, eight states, and so on. As the number of qubits increases, the number of parallel computational paths grows exponentially. At around fifty qubits, the system represents some 10¹⁵ to 10¹⁶ states (2⁵³ is about 9 × 10¹⁵), enough to challenge the capabilities of classical supercomputers. At three hundred qubits, the number of states exceeds the number of atoms in the observable universe.
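
The counting above can be checked directly, since n qubits need 2ⁿ complex amplitudes to describe in full. The sketch below also estimates the memory a classical computer would need to store that state vector. It assumes 16 bytes per amplitude (double-precision complex numbers), which is an illustrative storage choice. It then compares 2³⁰⁰ with the commonly quoted order-of-magnitude estimate of 10⁸⁰ atoms in the observable universe.

```python
"""How the classical description of n qubits grows with n."""

BYTES_PER_AMPLITUDE = 16          # complex128: two 8-byte floats (assumed storage format)
ATOMS_IN_UNIVERSE = 10 ** 80      # commonly quoted order-of-magnitude estimate


def num_amplitudes(n_qubits: int) -> int:
    """Number of basis states (and complex amplitudes) for n qubits."""
    return 2 ** n_qubits


def state_vector_bytes(n_qubits: int) -> int:
    """Memory needed to store the full state vector as double-precision complex numbers."""
    return num_amplitudes(n_qubits) * BYTES_PER_AMPLITUDE


if __name__ == "__main__":
    for n in (1, 2, 3, 10, 50, 53):
        print(f"{n:3d} qubits: {num_amplitudes(n):.3e} amplitudes, "
              f"{state_vector_bytes(n) / 1e15:.3g} PB")
    print("2**300 > 10**80:", num_amplitudes(300) > ATOMS_IN_UNIVERSE)
```

Under this storage assumption, fifty-three qubits already call for well over a hundred petabytes to hold the state exactly.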

However, there is an important caveat. When the computation is complete, the system must be measured. Measurement collapses the quantum state, yielding only a classical bit string. This means that not every classical algorithm benefits from quantum computation. Algorithms must be carefully designed to extract useful information from the quantum system.
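
The measurement caveat can be illustrated by sampling. Each run of the computation returns a single bit string, drawn with probability equal to the squared amplitude (the Born rule). The sketch below uses a hypothetical three-qubit state in which all eight outcomes are equally likely. The statistics emerge only when the computation is repeated many times.

```python
"""Measuring a quantum state yields one classical bit string per run."""
import math
import random
from collections import Counter

N_QUBITS = 3
# Hypothetical state: equal amplitude on all 2**3 basis states.
AMPLITUDES = [complex(1 / math.sqrt(2 ** N_QUBITS))] * (2 ** N_QUBITS)


def measure(amplitudes: list[complex], rng: random.Random) -> str:
    """Draw one basis state with probability |amplitude|^2 (Born rule) and return its bits."""
    n_qubits = int(math.log2(len(amplitudes)))
    probs = [abs(a) ** 2 for a in amplitudes]
    index = rng.choices(range(len(amplitudes)), weights=probs, k=1)[0]
    return format(index, f"0{n_qubits}b")


def run_shots(shots: int, seed: int = 0) -> Counter:
    """Repeat the 'computation' shots times and tally the bit strings observed."""
    rng = random.Random(seed)
    return Counter(measure(AMPLITUDES, rng) for _ in range(shots))


if __name__ == "__main__":
    print(run_shots(1))       # a single run: just one bit string
    print(run_shots(8000))    # many runs: roughly 1000 of each
```

A useful quantum algorithm has to concentrate the amplitude on the answers it is looking for. Otherwise each run returns an essentially random string like the ones above.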

Applications: chemistry, materials, and optimization

From my perspective as a physicist, the most exciting applications of quantum computing are constructive rather than destructive. Quantum chemistry is a particularly compelling example. Simulating quantum mechanical systems on classical computers is extremely difficult. Quantum chemistry calculations, which attempt to model how atoms and molecules behave, become intractable as systems grow in size. Quantum computers, however, offer a natural mapping between the physical system being studied and the computational system performing the simulation.
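
One way to see the scaling problem is to count electron configurations in an exact (full configuration interaction) treatment. With M spatial orbitals, N_α spin-up electrons, and N_β spin-down electrons, there are C(M, N_α)·C(M, N_β) determinants. The sketch below evaluates this count at half filling for a few hypothetical orbital counts.

```python
"""Size of the exact (full-CI) configuration space for a half-filled molecule."""
from math import comb


def fci_dimension(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of Slater determinants: C(M, N_alpha) * C(M, N_beta)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


if __name__ == "__main__":
    for m in (10, 20, 30, 40, 50):
        half = m // 2   # half filling: N_alpha = N_beta = M/2
        print(f"{m:3d} orbitals, {2 * half:3d} electrons: "
              f"{fci_dimension(m, half, half):.3e} determinants")
```

By contrast, a common encoding (the Jordan-Wigner mapping) assigns one qubit per spin orbital. Fifty spatial orbitals then need about a hundred qubits, which is one concrete form of the natural mapping described above.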

Over the past forty years, researchers have developed algorithms that translate quantum chemistry problems into forms suitable for quantum computers. More recently, there has been growing interest in combining quantum computing with artificial intelligence and large foundation models to further enhance these capabilities.

One concrete application involves materials for electrification. Electric motors often rely on rare-earth magnets. While rare-earth elements are not truly rare, they are limited in supply and can be environmentally costly to mine and process. Quantum computers could help identify alternative materials with similar magnetic properties but greater abundance or lower environmental impact.

Another important class of applications involves optimization problems. A simple example is the traveling salesman problem, which seeks the shortest route that visits each of a set of cities exactly once and returns to the start. While good classical algorithms exist for some versions of this problem, many real-world optimization problems involve complex constraints and large search spaces.
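
A brute-force solver makes the growth of the search space concrete: with n cities there are (n − 1)!/2 distinct round trips. The sketch below enumerates every tour for a handful of hypothetical city coordinates. It is a toy, not one of the good classical algorithms mentioned above, which avoid exhaustive search.

```python
"""Brute-force traveling salesman: exact but factorial-time."""
import itertools
import math

Point = tuple[float, float]


def tour_length(points: list[Point], order: tuple[int, ...]) -> float:
    """Length of the closed tour that visits the points in the given order."""
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % len(order)]])
        for i in range(len(order))
    )


def shortest_tour(points: list[Point]) -> tuple[float, tuple[int, ...]]:
    """Try every tour that starts at city 0 and return the best one."""
    best_len, best_order = math.inf, ()
    for perm in itertools.permutations(range(1, len(points))):
        order = (0, *perm)
        length = tour_length(points, order)
        if length < best_len:
            best_len, best_order = length, order
    return best_len, best_order


def num_distinct_tours(n_cities: int) -> int:
    """(n-1)!/2 round trips, counting a tour and its reverse once."""
    return math.factorial(n_cities - 1) // 2


if __name__ == "__main__":
    cities = [(0, 0), (3, 1), (6, 0), (5, 4), (2, 5), (1, 3)]   # hypothetical coordinates
    print(shortest_tour(cities))
    for n in (5, 10, 20):
        print(f"{n} cities: {num_distinct_tours(n)} distinct tours")
```

Twenty cities already give about 6 × 10¹⁶ distinct tours. This is why practical solvers, classical or quantum, cannot simply enumerate.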

Research into quantum approaches for optimization is ongoing. While the mapping between an optimization problem and a quantum algorithm is not as direct as in quantum chemistry, the potential benefits are significant, and the field is advancing rapidly.

Building quantum computers and the quantum supremacy experiment

Today, there is a vibrant global ecosystem working on quantum computing. Many physical platforms are being explored, including superconducting circuits, trapped ions, neutral atoms, photons, semiconductors, diamond systems, and quantum annealers. This work is being pursued by large technology companies, startups, government laboratories, and academic institutions.

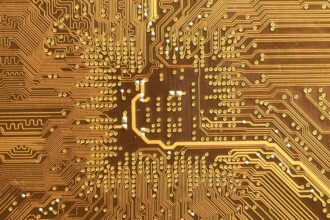

My focus has been on superconducting qubits. The simple Josephson junctions of the 1980s have evolved into highly sophisticated devices. A typical superconducting qubit consists of an engineered combination of capacitors and nonlinear inductors, forming a microwave resonator with carefully designed quantum properties.
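
The "capacitor plus nonlinear inductor" picture can be made concrete with a few formulas:

- For small currents, a Josephson junction with critical current I₀ acts as an inductor L_J = Φ₀/(2πI₀).
- With a shunt capacitance C, it forms a resonator at f_p = 1/(2π√(L_J C)).
- For a transmon-style qubit, the 0→1 transition lies roughly one charging energy E_C = e²/2C below f_p.
- The nonlinearity lowers the next transition by about a further E_C.

The transmon is a common design assumed here; it is not named in the lecture. The component values are hypothetical.

```python
"""Junction inductance, resonator frequency, and a transmon-style estimate (sketch)."""
import math

PHI0 = 2.067833848e-15    # magnetic flux quantum, Wb
E = 1.602176634e-19       # elementary charge, C
H = 6.62607015e-34        # Planck constant, J*s

# Hypothetical design values.
I0 = 30e-9       # junction critical current, A
C = 80e-15       # total shunt capacitance, F


def josephson_inductance(i0: float) -> float:
    """Small-signal Josephson inductance L_J = Phi0 / (2*pi*I0), in henries."""
    return PHI0 / (2 * math.pi * i0)


def lc_frequency(l_h: float, c_f: float) -> float:
    """Resonant frequency 1 / (2*pi*sqrt(LC)), in hertz."""
    return 1 / (2 * math.pi * math.sqrt(l_h * c_f))


def transmon_levels(i0: float, c_f: float) -> tuple[float, float]:
    """Approximate f01 and anharmonicity (f12 - f01) in Hz, valid when E_J >> E_C."""
    f_p = lc_frequency(josephson_inductance(i0), c_f)
    e_c_hz = E ** 2 / (2 * c_f) / H      # charging energy in frequency units
    return f_p - e_c_hz, -e_c_hz


if __name__ == "__main__":
    f01, alpha = transmon_levels(I0, C)
    e_j = PHI0 * I0 / (2 * math.pi)
    e_c = E ** 2 / (2 * C)
    print(f"L_J = {josephson_inductance(I0) * 1e9:.2f} nH, E_J/E_C = {e_j / e_c:.0f}")
    print(f"f01 ≈ {f01 / 1e9:.3f} GHz, anharmonicity ≈ {alpha / 1e6:.0f} MHz")
```

The negative anharmonicity of a couple of hundred megahertz is what lets the lowest two levels be addressed as a qubit without driving the next transition.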

Approximately seventy percent of the design effort involves microwave engineering. These devices are relatively large, easy to control, and fabricated using techniques similar to those used in the semiconductor industry. Once a design is optimized, it can be replicated across a chip to scale up the system.

This approach culminated in Google’s Sycamore processor, the chip used in the quantum supremacy experiment. It had fifty-four qubits, fifty-three of which were operational, arranged in a two-dimensional array. Each qubit was coupled to its neighbors via tunable couplers that allowed interactions to be turned on and off.

The experiment involved performing a specific computational task: sampling bit strings from the output of random quantum circuits, in effect generating correlated random numbers across a quantum state space of 2⁵³ states, roughly 10¹⁶ possibilities. The results were compared against classical supercomputer simulations. The quantum processor completed the task far faster than the best classical methods known at the time.
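
Random-circuit sampling experiments quantify the comparison with linear cross-entropy benchmarking (XEB). For sampled bit strings x_i, F_XEB = 2ⁿ⟨P(x_i)⟩ − 1, where P is the ideal output probability computed classically. A faithful sampler scores near 1, and a uniformly random one scores near 0. The toy below replaces the random circuit with a random 10-qubit state whose amplitudes are complex Gaussians, which reproduces the Porter-Thomas statistics of random circuits. Everything here is a hypothetical stand-in, not the pipeline used in the 2019 experiment.

```python
"""Toy linear cross-entropy benchmarking (XEB) on a random 10-qubit state."""
import random

N_QUBITS = 10
DIM = 2 ** N_QUBITS


def random_state_probs(rng: random.Random) -> list[float]:
    """Ideal output probabilities of a Haar-random state (Porter-Thomas statistics)."""
    amps = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(DIM)]
    weights = [abs(a) ** 2 for a in amps]
    total = sum(weights)
    return [w / total for w in weights]


def linear_xeb(samples: list[int], ideal: list[float]) -> float:
    """F_XEB = 2^n * mean(P_ideal(x_i)) - 1."""
    return DIM * sum(ideal[x] for x in samples) / len(samples) - 1


def demo(shots: int = 5000, seed: int = 7) -> tuple[float, float]:
    """XEB for an ideal sampler and for a fully depolarized (uniform) sampler."""
    rng = random.Random(seed)
    ideal = random_state_probs(rng)
    faithful = rng.choices(range(DIM), weights=ideal, k=shots)   # a perfect quantum sampler
    noise = [rng.randrange(DIM) for _ in range(shots)]          # pure noise: uniform strings
    return linear_xeb(faithful, ideal), linear_xeb(noise, ideal)


if __name__ == "__main__":
    good, bad = demo()
    print(f"faithful sampler XEB ≈ {good:.2f}, uniform sampler XEB ≈ {bad:.2f}")
```

At 53 qubits, computing the ideal probabilities P(x) is itself the hard classical step. That is why checking the processor's output required supercomputer simulations.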

This experiment demonstrated that quantum computation could outperform classical computation for a well-defined problem. Importantly, the observed errors and noise matched theoretical predictions. No unexpected physics emerged to undermine the approach.
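
Comparing errors with predictions can rest on a simple error model. If each operation fails independently, the fidelity of the whole circuit is roughly the product of the individual success probabilities. The sketch below applies that model using round-number error rates and operation counts sized loosely for a 53-qubit circuit. All of these values are hypothetical and are not the published Sycamore figures.

```python
"""Predicted circuit fidelity from independent component error rates (simple error model)."""
import math

# Hypothetical per-operation error rates.
ERR_1Q = 0.001    # single-qubit gate
ERR_2Q = 0.006    # two-qubit gate
ERR_MEAS = 0.03   # readout, per qubit

# Hypothetical operation counts for a 53-qubit, 20-layer circuit.
N_1Q = 53 * 20
N_2Q = 430
N_MEAS = 53


def predicted_fidelity(errors_and_counts: list[tuple[float, int]]) -> float:
    """Product of (1 - e)^count over all operation types."""
    return math.prod((1 - e) ** n for e, n in errors_and_counts)


if __name__ == "__main__":
    f = predicted_fidelity([(ERR_1Q, N_1Q), (ERR_2Q, N_2Q), (ERR_MEAS, N_MEAS)])
    print(f"predicted fidelity ≈ {f:.2%}")
```

Because the model multiplies many factors, a fidelity below one percent is expected even with sub-percent gate errors. The significance of the check is that the measured value agreed with such a product, with no extra error sources appearing.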

The system was housed in a dilution refrigerator, operating at millikelvin temperatures. The chip was mounted in a microwave package, with numerous control lines connecting it to room-temperature electronics. While the engineering is complex, it is fundamentally scalable.

The next steps involve improving qubit quality, reducing errors, refining fabrication techniques, and scaling to larger systems capable of error correction and practical computation. This is the path toward realizing a truly useful quantum computer.